The artificial intelligence debate is often framed as two-sided: the boosters and the doomers.

One side thinks AI will bring about abundance, the other predicts it will usher in catastrophe, and the two debate how much “AI safety” is needed. But the “current harms” research movement argues something else: Most of the cheers and fears are predicated on over-enthusiasm about things that won’t come to pass. Instead, focus on the threats that are here now.

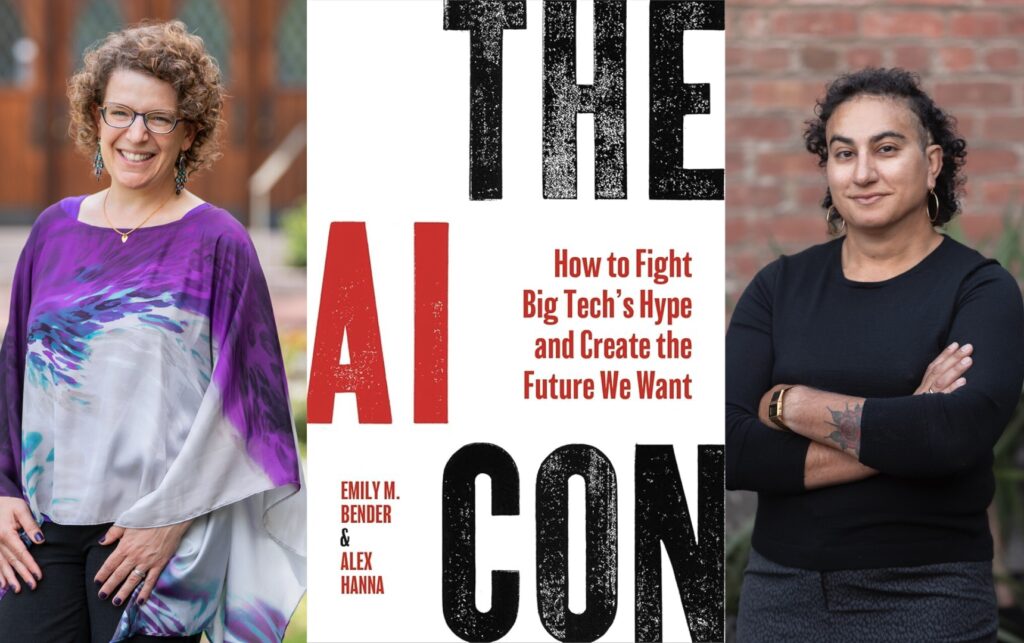

That’s from The AI Con: How to Fight Big Tech’s Hype and Create the Future We Want, the spring 2025 book by researchers Alex Hanna and Emily M. Bender.

In the same way I read futurist Ray Kurzweil’s book as plainly overly optimistic, this reads as overly caustic. As a tech journalist who has spent 20 years being hawked products, I understand, and am sympathetic to, their counterbalance to AI’s hype, but this book suggests nothing redeeming. Probabilistic language tools used for light therapy are only suicide risks, they say, not an entry point for others. Stitching together many tools into “everything machines” is a money-grab, giving cover to an endless list of state and corporate harms.

I admire them, have followed their work and am closer to them than to others in the field. I, too, struggle to believe the most extreme predictions from tech executives who have lied to me many times. Still, their ceaseless pessimism sometimes reads to me as if they’ve shut out everyone who is building with the technology. They write with the same confidence as those they most criticize: “AI is not going to replace your job. But it will make your job a lot shittier.”

That said, the authors close their book saying they are not anti-technology, nor even against pattern-matching algorithms. They write: “We want technology that is created to strengthen and empower communities, not technology that reproduces and enables systems of oppression, consolidation of power and environmental devastation.”

Below I share my notes for future reference.

My notes:

- “Systematic approaches to learning algorithms and machine inferences” or SALAMI is one of the teasing ways they describe the “marketing term” AI

- AI is often better described as a series of automations: decision-making (bail), classification (photos), recommendations (social media), translation (license plate readers and cartoons from photos) and then text/image generation

- At the December 2012 NeurIPS conference, Geoffrey Hinton, with his two graduate students, sold his company and deep learning paper at auction, and Google won at $44m

- Minsky formed the MIT AI lab while contemporary Joseph Weizenbaum created ELIZA hoping to show its limitations, but it instead became iconic

- Spell-checkers and radiology tools are focused tools (and sensible)

- In October 2017, a Palestinian construction worker was arrested by Israeli police after Facebook’s automatic translation service misinterpreted a photo caption, turning a friendly greeting into an alleged threat. The man, working in the West Bank settlement of Beitar Illit near Jerusalem, posted a picture of himself on Facebook leaning against a bulldozer, holding a cup of coffee and a cigarette. He captioned the photo in Arabic with a colloquial phrase often used for “good morning” or “good morning to you all,” reported as “yusbihuhum” or similar. Facebook’s artificial intelligence-powered translation service translated the caption as “attack them” in Hebrew and “hurt them” in English. Israeli police, who actively monitor social media for potential “lone-wolf” attacks, saw the translated text accompanied by a photo of a bulldozer, a vehicle used in previous terror attacks, and detained the man. Reports indicated that no Arabic-speaking officer checked the post before the arrest, with officers relying entirely on the automated translation. The man was released after a few hours of questioning once the mistake was realized.

- AI is “not going to make your job easier”

- Children learn through “intersubjectivity”: not from a TV alone but from a caregiver also engaged with the same thing (which can include a TV)

- Tools like ChatGPT are “synthetic text extruding machines”, just predicting the next word, not connecting to meaning

- In Dec 2022 Sam Altman tweeted “I am a stochastic parrot”, referring to the 2021 paper, co-authored by Emily M. Bender, that introduced the term. Altman implied that’s all humans do, but the authors argue there is deeper meaning to our words than to the output of these probability machines

- “AI hype reduces the human condition to one of computability, quantification and rationality.”

- Timnit Gebru paper that got her kicked out of Google

- The originator of IQ tests wanted just to find those in classrooms who needed help, not to grade everyone

- But The Bell Curve (1994 book) and surrounding research drifted toward age-old eugenics and race-based theories (IQ tests are thought to be hugely culturally biased)

- “In the vast majority of cases, AI is not going to replace your job. But it will make your job a lot shittier.”

- “Robo taxis are best understood not as something from a maximally convenient, high-tech future, but rather as the end goal of big tech squeezing all value out of a system that once provided a living wage for many.” [Was ridesharing from Lyft and Uber creating any broader community value, or did they just subsidize rides to capture market share and then raise prices again? Did they improve consumer experience?]

- Time’s 2023 story on OpenAI hiring Kenyan workers for less than $2 an hour to review images and text

- ImageNet was built with 50k crowdworkers, but only Hinton and Ilya Sutskever got rich via their sale; it is common that widespread labor becomes a tool for others to extract wealth from

- Virginia Eubanks’s 2018 book “Automating Inequality”

- The Allegheny Family Screening Tool (AFST) in Pittsburgh is a predictive model used to score child neglect referrals, designed to aid in, rather than replace, investigative decisions. Critics and investigations suggest the tool can exacerbate racial disparities and contribute to family separation by prioritizing high-risk scores for investigations (rather than directing resources, as was pledged).

- Julia Angwin’s 2016 ProPublica investigation on the COMPAS pretrial bail tool

- Large language models perform well in the manner of a Clever Hans trick (telling users what they want to hear), they argue

- The Epic Sepsis Model (ESM) faced significant criticism for poor performance in clinical settings, with studies showing it missed roughly two-thirds of sepsis cases and caused high rates of false alarms, leading to alert fatigue.

- UnitedHealth Group and its subsidiary, naviHealth, were sued in a class-action lawsuit for allegedly using an AI tool called nH Predict to prematurely cut off care for Medicare Advantage patients. The lawsuit alleges this AI system was used to override human doctors and deny necessary rehabilitation stays in nursing homes and home health care, with an alleged 90% reversal rate of denials upon appeal.

- Does passing the bar exam have anything to do with how helpful the tools would be in practice?

- The authors do not like ChatGPT therapy, but is there ever an entry-point use?

- Artist Karla Ortiz: artists want credit, consent and compensation

- Julie Ann Dawson told 404 Media upon closing her small publishing house, “the problem with AI is the people who use AI.”

- “Artistic practice is a social activity” but AI is antisocial

- Meta’s Galactica scientific paper tool was a failure: it survived just three days

- Hiroaki Kitano’s Nobel Turing Challenge: “This Scientist Thinks an A.I. Could Win a Nobel Prize by 2050”

- Lisa Messeri and M. J. Crockett: AI-driven science risks devaluing fields that use fewer AI tools (such as those that rely on human interviews that cannot be done more “efficiently”)

- “Slower science is better science”

- The authors advise journalists to avoid non-peer-reviewed papers, especially those from big tech, which can be “marketing in the guise of science”

- Maggie Harrison Dupré’s reporting on AI-generated content published by Sports Illustrated and elsewhere, supplied by AdVon Commerce

- Craigslist and eBay in the 1990s, then Google and then Facebook, crashed journalism, especially local news, leaving many ghost papers

- Karen Hao cited (this book), including that journalists need the little stories to get to the big stories

- On journalism sustainability, the authors cite philanthropic and reader-revenue models, like Defector and 404 Media, for covering AI

- AI boosters and doomers are “two sides of the same coin” — assuming the power and ignoring immediate pain

- Authors say the industry is “accelerating the real existential threat of human-made climate change”

- The AI safety field did not emerge from systems safety engineering but from fantasy around “alignment”. While systems safety engineering has recently begun to be applied to AI, the foundational “AI Safety” community was heavily shaped by futurologist, transhumanist, and sci-fi-influenced ideas about “superintelligence” in the 1990s and 2000s.

- Brian Christian’s 2020 book The Alignment Problem

- Authors reject “that AI development is inevitable”

- The Asilomar AI Principles are a set of 23 guidelines developed in 2017 to ensure artificial intelligence benefits humanity, covering safety, ethics, and research, signed by thousands of experts. They emphasize building safe, transparent, and aligned AI that promotes shared prosperity while avoiding arms races.

- In response to Kamala Harris, at the late 2023 UK AI Safety Summit, drawing a connection between AI doomers (AI safety) and people like the authors working on “current harms” (including the environment), the authors say they do not want the connection: “the two fields start from different premises”.

- The authors say they start with civil and human rights and questions of racial and economic equity, while the others focus on “fake scenarios”

- The authors criticize Andreessen’s 2023 Techno-Optimist Manifesto as an “anti safety” vision, also because it reinforces the idea that the AI safety people are the only other part of the discussion, while “the current harms” camp gets ignored and funded differently. (Andreessen’s “accelerationists”, or e/acc, “effective accelerationists”)

- Kurzweil: AI is “dual use” good or evil

- Timnit Gebru: TESCREAL, these booster/doomer theories have roots in eugenics

- These AI tools are more like Bentham’s panopticon than nuclear weapons: “The danger is not from some hypothetical extinction level event. The danger emerges from rampant financial speculation, the degradation of informational trust and environments, the normalization of data theft and exploitation, and the data harmonization systems that punish the people who have the least power in our society by tracking them through pervasive policing systems. But the doomer/boosters would have us looking the other way from all these real harms, bedazzled by their dystopian/utopian visions.”

- Without an agreed-upon definition of intelligence, we fall back on overly specific and limited cases like chess and standardized tests

- “The mind does not reside in the book”

- Alan Borning, Batya Friedman, Nick Logler: cloud computing is at odds with the materiality of these systems

- Michael Veale: not just how efficient AI is but how many and how competitive

- Sasha Luccioni: environmental effects of AI

- Big tech has admitted AI has made them miss their environmental goals

- “AI doomerism isn’t worth taking seriously” (160)

- LLM skeptic Gary Marcus criticized authors for their criticism of the AI pause letter

- The authors call the 2022 Blueprint for an AI Bill of Rights “well grounded” but say distractions were added

- “We need to redirect attention away from speculative risks… to the actual harms being done now in the name of AI”

- In May 2020, Harrisburg University researchers claimed to develop AI software capable of predicting “criminality” from facial images with 80% accuracy and “no racial bias”. Intended for law enforcement, the study faced immediate backlash and was withdrawn after over 1,000 experts condemned it as pseudoscience and racially biased

- SoundThinking’s claimed accuracy for ShotSpotter

Authors suggest these questions to ask:

- What is being automated? What goes in, and what comes out?

- Can you connect input to output?

- Are the systems described as human?

- How is the system evaluated?

- Who benefits? Who is harmed, and what resources do they have?

- How was the system developed and what are the labor practices?

- Garance Burke of the AP, on the AP Stylebook on AI; and Karen Hao with the Pulitzer Center on AI training for journalists

- Advice: Troll bad GenAI content; go to original sources with “groundtruth” and invest in library science, treating “information access to be a public good”; rely on existing regulation (FTC chair Lina Khan’s 2023 claim that there is no “AI loophole” for consumer and nondiscrimination laws) and the “zero trust” stance from EPIC (use existing law)

- LLMs aren’t good for advanced research (information access), both for reliability reasons and because the friction of information gathering is part of learning (to judge the source)

- Library science scholars Anna Lauren Hoffmann and Raina Bloom: “access” at Google versus libraries

- Chuck Schumer report: Driving U.S. Innovation in Artificial Intelligence

- In 2018 Oren Etzioni of the Allen Institute said AI adoption needed to speed up because it could save lives (driverless cars, legal support, etc.)

- Authors advocate that providers disclose more about models — Hugging Face (founded 2016 in NYC) facilitates this but most don’t use it

- Helsinki and Amsterdam pioneered public Artificial Intelligence Registers (AIRs) in 2020 to increase transparency and trust in city-deployed AI. These online registries, developed with Saidot, detail how municipal algorithms work, their datasets, human oversight, and potential risks, offering a “window” into AI usage.

- ELIZA creator Weizenbaum wrote in his 1976 book Computer Power and Human Reason: From Judgment to Calculation: “The relevant issues are neither technological nor even mathematical; they are ethical. They cannot be settled by asking questions beginning with ‘can.’ The limits of the applicability of computers are ultimately statable only in terms of oughts. What emerges as the most elementary insight is that since we do not now have any ways of making computers wise, we ought not now to give computers tasks that demand wisdom.”

- According to the company, a slide in a 1979 IBM training presentation read: “a computer can never be held accountable, therefore a computer must never make a management decision”

- Authors argue for more labor unions

- The authors say they are not anti-technology, nor even against pattern-matching algorithms: “We want technology that is created to strengthen and empower communities, not technology that reproduces and enables systems of oppression, consolidation of power and environmental devastation”

- Reject “everything machines” marketed as general purpose technology

- Focused tools, like Te Hiku Media’s Māori language work

- Instead of the term “AI,” speak of automation and data collection

- Princeton professor Ruha Benjamin wrote in her 2022 book Viral Justice: How We Grow the World We Want: “We have to build the world as it should be to make justice irresistible”

- The Luddites “weren’t against technology, but they were against technology that did not serve them”

- Feminist Data Manifest-No is a declaration of refusal and commitment. It refuses harmful data regimes and commits to new data futures.

- Jenna Burrell: the “ever receding horizon of the future”

- The Carceral Tech Resistance Network (CTRN) is an abolitionist, knowledge-sharing coalition founded in 2018 to fight the design, testing, and deployment of surveillance and punitive technologies. It connects over 76 advocacy groups across the US, focusing on opposing electronic monitoring, facial recognition, and drones.

- Cory Doctorow: bubbles either leave nothing behind or leave something; the AI bubble will leave large models that are not cost-effective, and so smaller, better-scoped ones will remain

- What will happen to climate goals from big tech?

- “The residue of the bubble will be sticky, coating creative industries with a thick, sooty grime of limitless tech expansionism. This is the fallout of venture capitalists and tech entrepreneurs not pausing to think about who would be caught in the blast radius” (195)